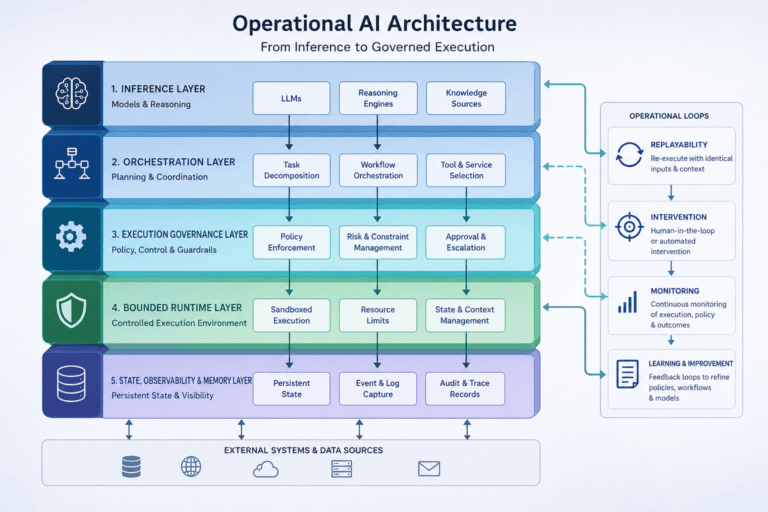

The Execution Gap in AI

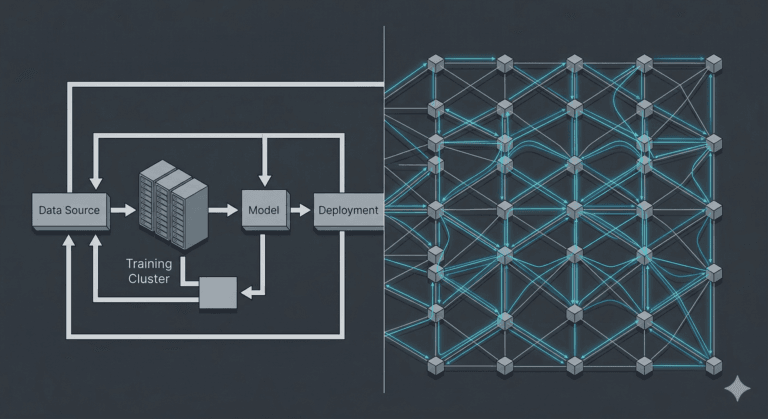

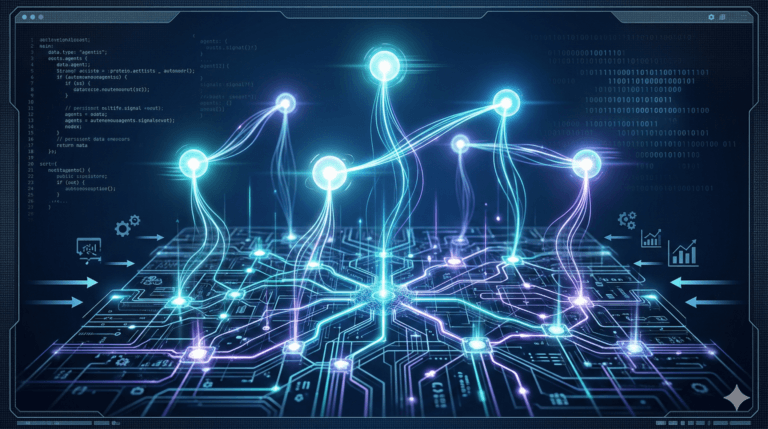

The execution gap in AI is the structural divide between generating intelligent decisions and governing execution behaviour in real-world operational systems. As AI systems become more persistent, more autonomous, and more tightly coupled to operational infrastructure, runtime governance, bounded autonomy, replayability, and intervention capability become increasingly important infrastructure layers. Together, these layers determine whether operators can place operational trust in such a system. This article explains why inference alone is insufficient for operational intelligence, and why observability (seeing what a system does) is not the same as control (being able to constrain, correct, or stop it). It also argues that governed execution may become a defining architectural requirement for AI systems deployed in industrial, robotic, energy, and infrastructure environments.
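To make the distinction between observability and control concrete, here is a minimal sketch of a governed execution layer sitting between an inference step and an actuator. It assumes a simple setpoint-style action schema. `GovernedExecutor`, `Action`, `Record`, the outcome strings, and the `valve_1` example target are hypothetical names chosen for illustration, not an API or method described in this article. The append-only log on its own would only provide observability. Control comes from the `submit` gate, which enforces a bounded operating envelope (bounded autonomy), honours an operator halt (intervention capability), and records every decision so that executed actions can be replayed against a simulator (replayability).

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class Action:
    """A decision proposed by an inference step (hypothetical schema)."""
    target: str
    value: float


@dataclass
class Record:
    """One entry in the append-only decision log."""
    action: Action
    outcome: str  # "executed", "rejected_bounds", or "rejected_halted"


@dataclass
class GovernedExecutor:
    """Hypothetical gate between model output and real-world actuation."""
    bounds: Dict[str, Tuple[float, float]]  # per-target envelope (bounded autonomy)
    actuate: Callable[[Action], None]       # the only path to the physical system
    halted: bool = False
    log: List[Record] = field(default_factory=list)

    def halt(self) -> None:
        """Operator intervention: block all further execution."""
        self.halted = True

    def resume(self) -> None:
        """Operator releases the halt."""
        self.halted = False

    def submit(self, action: Action) -> str:
        """Check a proposed action, execute it only if permitted, and log the decision."""
        if self.halted:
            outcome = "rejected_halted"
        else:
            envelope = self.bounds.get(action.target)
            # No envelope means no authority to act on that target.
            if envelope is None or not (envelope[0] <= action.value <= envelope[1]):
                outcome = "rejected_bounds"
            else:
                self.actuate(action)
                outcome = "executed"
        # Every decision is recorded, including rejections.
        self.log.append(Record(action, outcome))
        return outcome

    def replay(self, sink: Callable[[Action], None]) -> int:
        """Re-apply executed actions, in order, to a sink such as a simulator."""
        count = 0
        for record in self.log:
            if record.outcome == "executed":
                sink(record.action)
                count += 1
        return count


if __name__ == "__main__":
    applied: List[Action] = []
    ex = GovernedExecutor(bounds={"valve_1": (0.0, 0.8)}, actuate=applied.append)
    print(ex.submit(Action("valve_1", 0.5)))   # within envelope
    print(ex.submit(Action("valve_1", 0.95)))  # outside envelope
    ex.halt()
    print(ex.submit(Action("valve_1", 0.3)))   # blocked by operator halt
```

Running the demo prints `executed`, `rejected_bounds`, and `rejected_halted`. Only the first action reaches the actuator, while all three decisions remain in the log. The design choice to illustrate is that the model only ever proposes actions, and the executor decides whether they happen.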