Recent experiments demonstrating neural cultures learning to interact with a virtual environment highlight an architectural property often overlooked in enterprise AI discussions: continuous adaptation within a persistent substrate. Most enterprise AI systems operate using model-centric architectures that separate training from execution. When deployed models encounter shifting real-world conditions, performance degradation requires retraining and redeployment. This pattern introduces operational complexity, validation overhead, and recurring infrastructure costs. Substrate-based architectures integrate learning and execution within a persistent runtime environment where adaptation occurs through bounded modulation rather than periodic structural reset. The Doom-on-a-chip experiment illustrates this distinction at a biological level. For enterprise AI architects, the relevant question is not whether biological neurons outperform silicon systems but whether current model-centric architectures are structurally suited for long-running intelligent infrastructure.

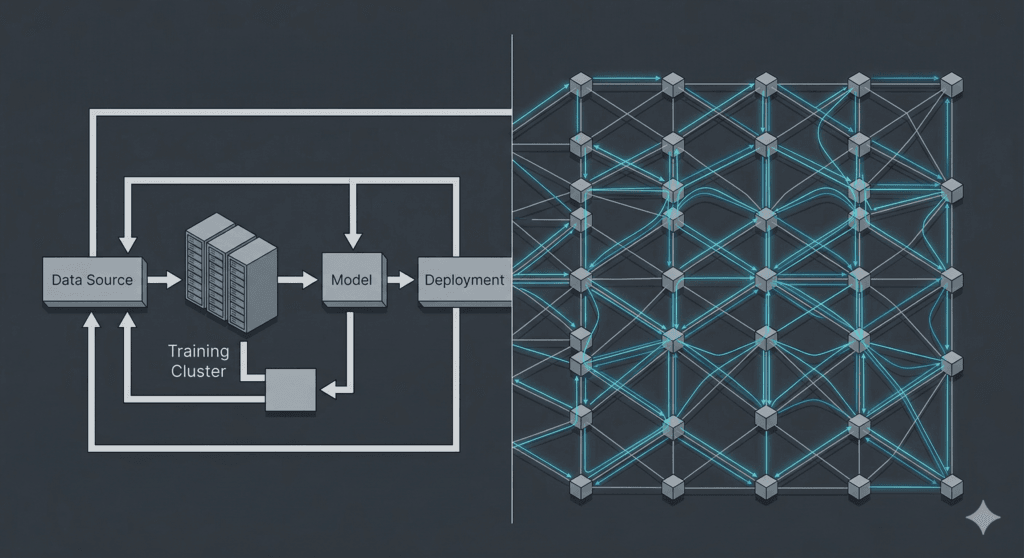

Why does enterprise AI require retraining?

Most enterprise AI systems separate training from execution. When deployed models encounter data that differs from their training distribution, performance degrades. Restoring accuracy requires retraining the model and redeploying it. This architectural separation creates recurring maintenance cycles that increase cost and operational complexity.

Persistent Intelligent Substrate

A persistent intelligent substrate is a computational environment where learning and execution occur simultaneously during runtime. Instead of separating training from deployment, the system maintains internal state and adapts continuously through bounded modulation while remaining operational.

Enterprise AI architecture determines how intelligent systems behave in production environments. Most current deployments rely on model-centric architectures in which training and execution are separated. This separation introduces a structural dependency on retraining cycles when real-world conditions change. As organisations expand AI deployments into long-running operational systems, the limitations of this architecture become increasingly visible. The Doom-on-a-chip experiment demonstrates an alternative property: continuous adaptation within a persistent substrate. While the experiment used biological neurons, the architectural insight applies more broadly. For enterprise AI systems expected to operate continuously, the distinction between model-centric retraining and substrate-based adaptation becomes a central design consideration.

Why the Doom-on-a-Chip Experiment Matters

Reports that cultured human neurons learned to play Doom attracted attention because of the unusual pairing of biology and a video game environment. For enterprise AI architecture, the important point is not the biological novelty but the mechanism of adaptation observed in the experiment.

In the study, neurons grown on a microelectrode array were connected to a simulated environment. Electrical stimulation represented environmental inputs. Neural firing patterns were interpreted as actions within that environment. Over repeated interactions, the activity patterns of the neural culture changed in ways that improved performance in the task. The system adapted while operating. There was no separate training phase that produced a frozen model to be deployed later.

This distinction is structural. Most enterprise AI systems follow a different lifecycle. A model is trained offline using historical data, deployed into production, and later retrained when performance declines. This pattern exists because learning and execution occur in separate phases. When real-world inputs diverge from the training distribution, the deployed model cannot adapt internally. Retraining becomes the repair mechanism.

The Doom-on-a-chip experiment demonstrates a system where learning and execution occur within the same runtime environment. Adaptation occurs through changes in internal state during operation. This does not prove that biological neurons outperform engineered systems. It does show that continuous interaction between system state and environment can produce behavioural improvement without periodic structural resets.

Consider a practical scenario. An enterprise fraud detection system trained on historical transaction data is deployed in production. Fraud patterns change as attackers adapt. The model’s detection accuracy declines. The organisation collects new data, retrains the model, validates the update, and redeploys it. During this cycle the system either operates with degraded performance or introduces risk through model updates.

The experiment suggests a different architectural possibility. A system embedded in a persistent execution environment can adapt its behaviour during operation rather than waiting for retraining cycles. The observation does not prescribe a specific engineering implementation. It does identify a structural property of adaptive systems that enterprise AI architectures must confront.

The Dominant Model-Centric AI Architecture

Most enterprise AI systems are built around a model-centric architecture. In this design, learning occurs during a training phase that precedes deployment. The model is optimised using historical data, after which its parameters are fixed and deployed into production. During operation, the model performs inference but does not change its internal structure. Any improvement in behaviour requires a new training cycle.

This separation between training and execution creates a predictable lifecycle. A system is trained, validated, deployed, monitored, and eventually retrained when performance declines. The retraining process produces a new model version that must again pass validation before deployment. Each iteration introduces operational overhead and potential instability.

The mechanism that triggers retraining is usually distribution shift. When the statistical properties of real-world inputs change, a model trained on historical data may no longer produce reliable predictions. This condition is commonly referred to as model drift. The architecture itself does not contain a mechanism for internal adaptation during runtime. Retraining becomes the primary repair mechanism.

A common example appears in recommendation systems used by digital commerce platforms. A model may be trained to predict which products a user is likely to purchase based on historical browsing and purchase behaviour. Once deployed, the model evaluates new interactions and generates recommendations. If customer behaviour changes due to seasonal demand, market trends, or product catalogue updates, prediction quality may degrade. The organisation must collect new behavioural data and retrain the model to restore performance.

This architecture has practical advantages. Offline training allows engineers to use large datasets and optimisation techniques that are computationally intensive. It also allows organisations to evaluate models under controlled conditions before deployment. These properties explain why the model-centric approach became the dominant design pattern in modern AI systems.

However, the architecture imposes a constraint. Because learning occurs outside the runtime environment, deployed systems cannot adapt internally when conditions change. Adaptation requires retraining and redeployment. The retraining loop is therefore not simply an operational habit. It is a direct consequence of the architecture itself.

The Mechanism of AI Drift

AI drift occurs when the statistical relationship between inputs and outputs in a deployed system diverges from the relationship present in the data used during training. A model trained on historical observations encodes patterns from that dataset. When the environment changes, the assumptions embedded in the model may no longer hold. Performance declines because the model continues to apply patterns that no longer reflect the current environment.

The mechanism can be described directly. A model learns a mapping between input variables and predicted outcomes. This mapping is derived from the training distribution. When the production environment produces inputs that differ from that distribution, the model receives signals that it has not been optimised to interpret. The model continues to produce outputs, but the accuracy of those outputs degrades.

Two common forms of drift illustrate the problem. The first is input distribution drift. This occurs when the characteristics of incoming data change. The second is concept drift. This occurs when the relationship between inputs and outcomes changes even if the inputs themselves remain similar. Both conditions disrupt the assumptions learned during training.

Consider a credit risk model trained using historical lending data. The model learns patterns linking borrower characteristics to default outcomes. If economic conditions change or new lending products alter borrower behaviour, the statistical relationship between those characteristics and default risk may shift. The model still produces predictions, but those predictions are based on patterns that no longer match the real environment. Performance must be evaluated again, and the system is typically retrained using newer data.

This process is observable and falsifiable. If the distribution of input features in production deviates from the distribution present in the training dataset, prediction error rates typically increase. Monitoring systems often track these distribution shifts directly. When the shift crosses predefined thresholds, retraining is triggered.
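The threshold-triggered monitoring described above can be sketched in a few lines. This is a minimal illustration, not a description of any particular monitoring product: it uses the population stability index (PSI) as one possible shift measure, and the function names, the bin count, and the `RETRAIN_THRESHOLD` value of 0.25 (a commonly quoted rule of thumb) are assumptions introduced here.

```python
import math
from typing import Sequence

RETRAIN_THRESHOLD = 0.25  # hypothetical threshold; tune per feature and use case


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """PSI between a training sample and a production sample of one feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the training range
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def should_retrain(training_sample: Sequence[float], production_sample: Sequence[float]) -> bool:
    """Return True when the measured shift crosses the predefined threshold."""
    return population_stability_index(training_sample, production_sample) > RETRAIN_THRESHOLD
```

Note that the check only detects the shift. In a model-centric system the response still happens elsewhere, in the training pipeline.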

The architectural implication is straightforward. In a model-centric system, adaptation does not occur during runtime. The model cannot alter its internal parameters in response to new information while operating. Instead, new data must be collected and processed in a separate training pipeline. The resulting model replaces the previous one.

Drift therefore exposes the structural dependency between model-based AI systems and retraining cycles.

Operational Consequences of Retraining

Retraining is often treated as a routine maintenance step in enterprise AI systems. In practice, it introduces a series of operational constraints that follow directly from the model-centric architecture described earlier. When learning occurs outside the runtime environment, any adaptation requires rebuilding the model through a separate training process. This separation creates repeated operational cycles that affect reliability, cost, and deployment risk.

The first consequence is validation overhead. A retrained model cannot be deployed immediately after training. It must be evaluated against test datasets, benchmarked against previous versions, and assessed for unexpected behaviour. Organisations operating in regulated environments often require additional review before a new model version is approved. Each retraining cycle therefore triggers a full evaluation workflow.

A second consequence is deployment instability. When a new model replaces a previous version, behaviour may change in ways that are not immediately obvious. Even when performance metrics improve on validation datasets, production behaviour may differ due to interactions with real-world inputs. Engineers often deploy new models gradually to detect unexpected outcomes before full rollout.

Consider a transaction fraud detection system used by a payment processor. The system monitors incoming transactions and assigns a risk score. If the model begins missing new fraud patterns, engineers collect updated transaction data and retrain the model. The new version must then be validated against historical fraud cases, evaluated for false positives, and tested in a controlled environment before deployment. During this process the system continues operating with a model that may already be partially outdated.

Retraining also creates infrastructure demands. Training pipelines require storage for large datasets, compute resources for optimisation, and engineering time to manage data preparation and model evaluation. These resources must be maintained even when the system itself is already deployed and operational.

These consequences are not implementation mistakes. They arise from the architectural choice to separate training from execution. If learning occurs only during retraining, then operational stability depends on the ability to repeatedly rebuild and redeploy models as conditions change.

Persistent Intelligent Substrates

A persistent intelligent substrate is a runtime environment in which learning and execution occur simultaneously. The system maintains internal state across time and can adjust its behaviour during operation rather than relying on periodic retraining. The defining property is persistence. The system continues to operate while adaptation occurs within the same execution environment.

In this architecture, state changes occur through internal mechanisms triggered by interaction with the environment. Inputs alter the system state. State changes influence subsequent outputs. Feedback from those outputs alters future state transitions. Adaptation emerges from these feedback loops rather than from periodic model replacement.

The difference can be described in operational terms. In a model-centric system, the deployed model performs inference but does not change its internal structure. Learning occurs outside the runtime environment through a separate training pipeline. In a persistent substrate, state transitions and adaptation occur during execution. The runtime system itself contains the mechanisms required for adjustment.

A practical scenario illustrates the distinction. Consider an industrial monitoring system that detects abnormal equipment behaviour from sensor data. In a model-centric design, engineers train a model using historical sensor readings. If equipment behaviour evolves due to wear or environmental changes, the model must be retrained with new data. During this process, the system relies on the existing model until a replacement is validated and deployed.

In a persistent substrate architecture, the monitoring system maintains internal state that evolves with incoming sensor signals. Feedback signals modify how future sensor patterns are interpreted. Adaptation occurs incrementally within the running system rather than through periodic retraining cycles.
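A minimal sketch of this idea follows, assuming a single sensor channel and a deliberately simple update rule. `AdaptiveMonitor`, its parameters, and the clamped update are hypothetical names and choices made for illustration. They show one way that state can evolve during operation while each adjustment stays bounded.

```python
from dataclasses import dataclass


@dataclass
class SubstrateState:
    mean: float    # running estimate of the "normal" sensor level
    spread: float  # tolerated deviation, fixed in this sketch


class AdaptiveMonitor:
    """Hypothetical monitor whose internal state evolves while it keeps scoring inputs."""

    def __init__(self, mean: float, spread: float, rate: float = 0.05,
                 max_step: float = 0.5, threshold: float = 3.0) -> None:
        self.state = SubstrateState(mean, spread)
        self.rate = rate            # how strongly each reading moves the state
        self.max_step = max_step    # bound on any single adjustment
        self.threshold = threshold  # anomaly cut-off in units of spread

    def observe(self, reading: float) -> bool:
        """Score one reading against the current state, then adapt the state."""
        deviation = reading - self.state.mean
        is_anomaly = abs(deviation) > self.threshold * self.state.spread
        # Bounded modulation: move toward the reading, never by more than max_step.
        step = max(-self.max_step, min(self.max_step, self.rate * deviation))
        self.state.mean += step
        return is_anomaly
```

With these settings, a slow ramp in the readings is absorbed into the running state without raising alerts, while a sudden spike is flagged and can move the state by at most `max_step`.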

This architecture does not remove the need for engineering oversight or structural updates. It does change where adaptation occurs. Instead of rebuilding the system repeatedly through external training pipelines, adaptation becomes part of the runtime mechanism. The system remains operational while adjusting to environmental change.

Runtime Governance

Runtime governance refers to mechanisms embedded within an intelligent system that monitor behaviour during execution and apply bounded adjustments when instability is detected. Unlike external policy layers or post hoc auditing, runtime governance operates within the execution environment itself. Its function is to observe system state, detect patterns that indicate undesirable behaviour, and trigger controlled responses that preserve operational stability.

In a model-centric architecture, governance typically occurs outside the model. Organisations deploy monitoring dashboards, rule-based filters, or manual review processes to detect problematic outputs. These mechanisms react after behaviour has already been produced. If the behaviour is consistently incorrect, the typical response is retraining. Governance therefore becomes reactive rather than structural.

Runtime governance changes where control is applied. The governance layer observes internal state transitions while the system is operating. When predefined conditions occur, the system can alter internal parameters, adjust signal pathways, or temporarily constrain certain behaviours. The adjustment occurs during execution rather than through external retraining pipelines.

A concrete scenario illustrates this mechanism. Consider an autonomous warehouse system that routes robots through aisles to collect inventory. The navigation system may learn routing patterns based on traffic flow within the warehouse. If the system begins repeatedly selecting routes that lead to congestion, a runtime governance layer can detect repeated oscillations in routing decisions. Instead of retraining the navigation model, the governance mechanism can alter internal weighting or exploration behaviour so that alternative routes are tested during subsequent operations.

The critical property is that governance operates through defined triggers and bounded responses. Engineers specify conditions that indicate instability or inefficiency. The system then modifies behaviour through internal state changes rather than by replacing the entire model.

This mechanism can be evaluated empirically. If governance rules are well defined and observable, system behaviour should remain reproducible given the same inputs and the same starting state. If the governance layer alters behaviour beyond those defined conditions, engineers can detect the deviation through inspection of execution traces. Runtime governance therefore provides a mechanism for maintaining operational stability while allowing systems to adapt during execution.
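The pattern of a defined trigger, a bounded response, and an inspectable trace could look roughly like the sketch below, which uses the warehouse routing scenario. `RoutingGovernor`, its window size, the switch count used as the oscillation trigger, and the exploration ceiling are all illustrative assumptions rather than part of any specific system.

```python
from collections import deque


class RoutingGovernor:
    """Hypothetical governance layer: defined trigger, bounded response, logged trace."""

    def __init__(self, window: int = 6, max_switches: int = 4, step: float = 0.05,
                 exploration: float = 0.05, ceiling: float = 0.30) -> None:
        self.recent: deque[str] = deque(maxlen=window)  # last few routing decisions
        self.max_switches = max_switches  # trigger: this many route changes in the window
        self.step = step                  # size of each adjustment
        self.exploration = exploration    # internal parameter being modulated
        self.ceiling = ceiling            # hard bound on the adjustment
        self.trace: list[dict] = []       # execution trace for later inspection

    def record(self, route: str) -> float:
        """Record one routing decision and return the current exploration rate."""
        self.recent.append(route)
        routes = list(self.recent)
        switches = sum(a != b for a, b in zip(routes, routes[1:]))
        if switches >= self.max_switches:
            new_value = min(self.ceiling, self.exploration + self.step)
            self.trace.append({"trigger": "oscillation", "switches": switches,
                               "from": self.exploration, "to": new_value})
            self.exploration = new_value
        return self.exploration
```

Because every adjustment is appended to `trace`, an engineer can check after the fact that the parameter only changed when the trigger condition held and never exceeded the ceiling.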

Biological Substrates vs Engineered Substrates

The Doom-on-a-chip experiment used a biological substrate. Neurons grown on a microelectrode array interacted with a simulated environment and altered their activity patterns through repeated exposure to feedback signals. The relevance of this result does not depend on whether biological neurons are suitable for enterprise systems. The relevance lies in the architectural properties that the experiment demonstrates.

A biological neural culture operates as a persistent substrate. Electrical activity changes the state of neurons. Those state changes alter future responses to stimulation. Feedback from the environment modifies subsequent activity patterns. Learning therefore occurs during execution rather than through a separate training process.

Engineered substrates can implement similar architectural principles using different mechanisms. Instead of biological ion channels and synapses, a software system maintains internal state variables and update rules. Input signals trigger state transitions. State transitions influence subsequent outputs. Feedback signals modify how future inputs are interpreted. The architectural property is persistence of state and continuous adaptation within the runtime environment.

The difference between biological and engineered substrates lies primarily in controllability and observability. Biological systems adapt through biochemical processes that are difficult to instrument at fine granularity. Engineered systems can expose internal state variables, execution traces, and update rules for inspection. This transparency allows engineers to monitor how behaviour evolves over time and to define governance mechanisms that constrain adaptation.

A concrete scenario illustrates the distinction. In the neural culture experiment, researchers could stimulate the culture and observe aggregate firing patterns across electrodes. They could measure improvement in task performance, but they could not fully inspect the internal biological mechanisms that produced those changes. In contrast, a software system built on an engineered substrate can record every state transition, every input signal, and every adjustment applied by the governance layer. Engineers can replay the execution sequence and verify whether the same inputs produce the same behaviour.

The architectural lesson is not that biological neurons should be used directly in enterprise systems. The lesson is that persistent substrates capable of adapting during execution can be implemented in both biological and engineered forms.

Deterministic Execution and Replayability

Deterministic execution means that a system produces the same output when given identical inputs and an identical internal starting state. Replayability means that the sequence of state transitions that produced a decision can be reproduced and inspected after the event. These properties allow engineers and auditors to verify how a system reached a specific outcome.

Many deployed AI systems do not guarantee full replayability. A model may produce a prediction, but the surrounding execution context that influenced the decision may not be preserved. If input preprocessing steps, feature transformations, or intermediate state changes are not recorded, engineers cannot reconstruct the exact conditions under which the decision occurred.

A persistent substrate architecture allows deterministic replay when two conditions are met. First, the execution engine must process events in a defined order. Second, the system must record the internal state transitions that occur as inputs are processed. When these conditions hold, replaying the same inputs from the same initial state reproduces the same sequence of transitions and the same output.

Consider a credit decision system evaluating a loan application. The system receives inputs such as transaction history and income records and produces an approval or rejection decision. If the system records the input data, the initial state of the execution environment, and each state transition triggered by those inputs, engineers can replay the evaluation later. Running the same inputs through the deterministic execution engine produces the same decision and the same intermediate state transitions. An auditor can then inspect the exact path that led to the outcome.
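The two conditions for replay (ordered event processing and recorded state transitions) can be illustrated with a small sketch. The scoring rule, the event fields `weight` and `value`, and the approval cut-off of 1.0 are invented for this example. The point is only that a pure transition function plus a transition log is enough to reproduce and verify a decision.

```python
import hashlib
import json


def transition(state: dict, event: dict) -> dict:
    """Pure function: the same state and event always give the same next state."""
    score = state["score"] + event["weight"] * event["value"]
    return {"score": round(score, 6), "events_seen": state["events_seen"] + 1}


def digest(state: dict) -> str:
    """Stable fingerprint of a state, used to compare runs."""
    return hashlib.sha256(json.dumps(state, sort_keys=True).encode()).hexdigest()


def run(initial_state: dict, events: list[dict]) -> tuple[str, list[dict]]:
    """Process events in order, logging every state transition."""
    state = dict(initial_state)
    log = []
    for seq, event in enumerate(events):
        state = transition(state, event)
        log.append({"seq": seq, "event": event, "state_digest": digest(state)})
    decision = "approve" if state["score"] >= 1.0 else "reject"
    return decision, log


def replay_matches(initial_state: dict, log: list[dict], expected_decision: str) -> bool:
    """Re-run the logged events and check the decision and every intermediate state."""
    decision, new_log = run(initial_state, [entry["event"] for entry in log])
    same_path = [e["state_digest"] for e in new_log] == [e["state_digest"] for e in log]
    return decision == expected_decision and same_path
```

An auditor holding the initial state and the log can call `replay_matches` to confirm that the recorded path leads to the recorded decision. A log whose events were altered after the fact would fail the digest comparison.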

This capability addresses a structural limitation of the model-centric architectures described earlier. When learning and execution are separated, behaviour changes through retraining and redeployment. Each new model version introduces a new decision boundary that cannot be reconstructed from earlier runtime events alone. In contrast, a persistent substrate with deterministic execution allows behaviour to evolve through controlled state changes while preserving the ability to replay and inspect decisions that occurred during operation.

Economic Implications for Enterprise AI

The architecture of an AI system determines where operational costs occur. In model-centric architectures, adaptation requires retraining. Each retraining cycle requires data collection, feature preparation, compute resources, and validation before deployment. These steps are repeated whenever model performance declines.

This pattern follows directly from separating training from execution. A deployed model cannot change its internal parameters during operation. When input distributions change, the only mechanism available to restore performance is retraining the model outside the runtime system and deploying a new version.

A concrete example appears in recommendation systems used by retail platforms. A model trained on historical browsing behaviour generates product recommendations for users. When new products are introduced or customer purchasing patterns change, the model may continue recommending items that are no longer relevant. Engineers collect updated interaction data and retrain the model to incorporate these changes. The retrained model must then pass evaluation tests before it replaces the previous version in production. The recommendation system therefore depends on repeated retraining cycles to maintain accuracy.

This architecture produces a measurable operational cost structure. Organisations must maintain data pipelines, training infrastructure, and engineering workflows capable of rebuilding models repeatedly. If behavioural change in the environment occurs faster than retraining cycles can respond, system performance declines between updates.

As argued above, retraining dependency arises from separating learning from execution. Persistent intelligent substrates alter this cost structure by moving adaptation into the runtime environment. Instead of rebuilding models through external pipelines, the system adjusts behaviour through controlled state transitions during operation. The economic consequence is testable. If adaptation occurs inside the execution environment, the frequency of external retraining cycles should decrease.

The economic question for enterprise AI is therefore architectural. Systems built on model-centric designs allocate resources to repeated reconstruction of models. Systems built on persistent substrates allocate resources to operating and governing adaptive runtime environments.

Production Stability and Regulatory Pressure

Enterprise AI systems increasingly operate under regulatory requirements that demand decision traceability and reproducibility. In sectors such as finance and healthcare, organisations must be able to explain how automated decisions were produced and must retain evidence that allows those decisions to be reconstructed.

Model-centric AI architectures complicate this requirement. When model performance declines, organisations retrain and redeploy updated versions. Each redeployment alters the mapping between inputs and outputs. If a decision was produced by model version A and the system later deploys version B, the decision boundary used at the time of the original decision no longer exists in the live system.

A concrete example occurs in credit risk assessment. A bank may deploy a model that evaluates loan applications using signals such as income records and transaction history. Regulators can require the bank to explain why a specific application was rejected. If the decision occurred under a previous model version, the institution must retain that exact model, the associated feature pipeline, and the data transformations used during evaluation. If any component of that environment is missing, the bank cannot reproduce the decision.

This requirement creates operational overhead. Organisations must archive historical models, store training artefacts, and maintain infrastructure capable of reconstructing prior execution environments. Without these controls, institutions cannot guarantee reproducibility of earlier decisions.
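To make the archival burden concrete, the sketch below shows one possible shape for a decision record in a model-centric system. The field names, the version strings, and the `can_reproduce` check are hypothetical. They illustrate that a past decision is reproducible only if every artefact it references is still retained.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRecord:
    application_id: str
    decision: str
    model_version: str     # exact model that produced the decision
    pipeline_version: str  # feature pipeline and transformations in use at the time
    input_digest: str      # fingerprint of the inputs that were evaluated


def make_record(application_id: str, features: dict, decision: str,
                model_version: str, pipeline_version: str) -> DecisionRecord:
    """Bind a decision to the artefacts needed to reproduce it later."""
    payload = json.dumps(features, sort_keys=True).encode()
    return DecisionRecord(application_id, decision, model_version,
                          pipeline_version, hashlib.sha256(payload).hexdigest())


def can_reproduce(record: DecisionRecord, archived_models: set[str],
                  archived_pipelines: set[str]) -> bool:
    """A decision is reproducible only if both referenced artefacts were retained."""
    return (record.model_version in archived_models
            and record.pipeline_version in archived_pipelines)
```

If either the model version or the pipeline version named in a record has been discarded, `can_reproduce` returns `False`, which corresponds to the case where the institution cannot reconstruct the decision.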

As argued above, retraining dependency arises from separating learning from execution. Persistent intelligent substrates provide a different mechanism for behavioural change. Instead of replacing models through retraining, behaviour evolves through internal state transitions within a stable execution environment. If the runtime engine is deterministic and state transitions are recorded, engineers can replay the exact sequence of events that produced a decision.

This architecture addresses a regulatory requirement directly. A decision can be reconstructed by replaying the recorded state and input conditions that existed at the time the decision occurred.

Architectural Trade-offs

Persistent intelligent substrates change where adaptation occurs in an AI system, but they introduce different engineering constraints. In model-centric architectures, most complexity is concentrated in the training pipeline. Engineers construct datasets, optimise model parameters, and validate the resulting model before deployment. Once deployed, the system executes inference without modifying its internal parameters. Adaptation occurs only when engineers retrain and redeploy a new model version.

A persistent substrate shifts this mechanism. Learning occurs during execution through state transitions triggered by incoming signals and feedback loops. The system therefore modifies behaviour while it is operating. This architectural shift requires engineers to design explicit rules governing how internal state can change. If those rules are absent or poorly defined, runtime adaptation may produce unstable behaviour.

A concrete example appears in predictive maintenance systems used in industrial facilities. In a model-centric design, engineers train a model on historical sensor data that captures patterns preceding equipment failure. The deployed model evaluates live telemetry and produces failure predictions. If machine behaviour changes due to maintenance procedures or operating conditions, engineers retrain the model using updated data and redeploy it.

In a persistent substrate design, the monitoring system maintains internal state that evolves as telemetry signals arrive. Changes in vibration or temperature signals can alter the system’s interpretation of future inputs without retraining a model. This mechanism allows the system to adapt incrementally during operation. However, engineers must implement governance rules that constrain how state transitions occur. Without those constraints, small adjustments could accumulate and alter behaviour in unintended ways.
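One way to keep small adjustments from accumulating without limit is to bound both the size of each step and the total distance from a validated baseline. The sketch below assumes a single adaptive parameter, and `BoundedParameter` and its limits are illustrative names and values, not a prescribed design.

```python
class BoundedParameter:
    """Hypothetical adaptive parameter with a per-step bound and a total drift budget."""

    def __init__(self, baseline: float, max_step: float, max_total_drift: float) -> None:
        self.baseline = baseline              # value validated by engineers
        self.value = baseline                 # current runtime value
        self.max_step = max_step              # largest change allowed per adjustment
        self.max_total_drift = max_total_drift  # largest allowed distance from baseline

    def adjust(self, delta: float) -> float:
        """Apply a requested change, clamped by both bounds, and return the new value."""
        step = max(-self.max_step, min(self.max_step, delta))
        lower = self.baseline - self.max_total_drift
        upper = self.baseline + self.max_total_drift
        self.value = max(lower, min(upper, self.value + step))
        return self.value
```

For example, with a baseline of 70.0, a step bound of 0.5, and a drift budget of 5.0, repeated upward nudges stop at 75.0. Moving beyond that point would require an explicit, reviewed change to the baseline rather than further runtime adaptation.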

As argued above, retraining dependency arises from separating learning from execution. Persistent substrates remove this separation by embedding adaptation within the runtime system. The trade-off is that verification moves from validating a static model to validating the rules that govern state transitions and runtime adaptation.

A New Direction for Enterprise AI Architecture

Enterprise AI architecture is beginning to confront a structural limitation. Systems that depend on periodic retraining struggle to maintain stability when the environment changes continuously. When learning is separated from execution, the system can only adapt by rebuilding the model and redeploying it. This architecture treats learning as a maintenance activity rather than as part of system operation.

The thesis of this article is that retraining dependency arises from this separation. If learning occurs outside the runtime environment, adaptation requires repeated reconstruction of the model. Each reconstruction introduces a new decision boundary and a new operational state that must be validated and governed.

A different architectural direction is possible. Instead of treating intelligence as a model that is periodically rebuilt, the system can be designed as a persistent execution substrate. In this design, signals from the environment trigger state transitions within the system. Feedback from outcomes modifies how future signals are processed. Learning therefore occurs as part of runtime execution.

A concrete example can be seen in logistics optimisation. A distribution network routes deliveries across a fleet of vehicles. In a model-centric system, engineers train a routing model using historical traffic patterns and delivery data. When traffic patterns change due to infrastructure updates or seasonal demand, the model must be retrained to incorporate the new conditions.

In a persistent substrate architecture, routing behaviour can adjust through continuous feedback from travel times and route congestion signals. When a particular routing pattern repeatedly produces delays, the system can modify its internal state so that alternative paths are explored in subsequent operations. The system adapts through state transitions rather than through external retraining.
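The feedback loop in this routing example can be sketched with a running delay estimate per route. `RouteSelector`, the route names, and the smoothing rate are assumptions made for illustration. Starting every estimate at zero is a simple device that makes untried routes look attractive, so that each route is tried at least once.

```python
class RouteSelector:
    """Hypothetical selector whose route preferences shift with observed delays."""

    def __init__(self, routes: list[str], rate: float = 0.3) -> None:
        # Estimates start at zero, so untried routes are preferred until observed.
        self.estimate = {route: 0.0 for route in routes}
        self.rate = rate  # weight given to each new observation

    def choose(self) -> str:
        """Pick the route with the lowest estimated delay (first listed wins ties)."""
        return min(self.estimate, key=self.estimate.get)

    def feedback(self, route: str, delay_minutes: float) -> None:
        """State transition: blend the observed delay into the running estimate."""
        old = self.estimate[route]
        self.estimate[route] = old + self.rate * (delay_minutes - old)
```

A usage example: with routes `["north", "south"]`, the selector first chooses `north`. After `feedback("north", 30.0)` reports a long delay, the next call to `choose()` returns `south`, without any retraining step.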

This architectural shift can be evaluated empirically. If learning and execution occur in the same runtime environment, behavioural adaptation should occur without rebuilding the underlying system. If the system still requires frequent retraining cycles to remain effective, then the architecture has not eliminated the separation between learning and execution.

Core Concepts

- Enterprise AI architecture

The structural design of AI systems used in production environments.

- AI drift

Performance degradation caused by changes in real-world input distributions.

- Retraining cycle

The process of updating a deployed model to restore performance.

- Persistent intelligent substrate

A runtime environment where learning and execution occur simultaneously.

- Runtime governance

Mechanisms that monitor and modulate system behaviour during execution.

- Deterministic execution

The ability to reproduce identical behaviour given identical inputs.

Key Takeaways

- Most enterprise AI systems depend on retraining cycles.

- Retraining arises from separating training and execution.

- Continuous substrates integrate adaptation with runtime behaviour.

- Runtime governance enables bounded adaptation.

- Deterministic execution improves auditability and reproducibility.

- Persistent intelligent systems may reduce operational maintenance cycles.

- The Doom-on-a-chip experiment illustrates architectural principles rather than biological superiority.

Key Questions

Why do enterprise AI systems drift?

Because deployed models encounter data distributions that differ from training data.

What causes retraining dependency?

The architectural separation between training and execution.

What is a persistent intelligent substrate?

A runtime system that integrates learning and execution continuously.

Does substrate-based AI eliminate retraining entirely?

No. It reduces reliance on retraining by allowing bounded runtime adaptation.

Why does deterministic execution matter?

It enables reproducibility and auditability in production systems.

References

Kagan, B. J., et al. “In vitro neurons learn and exhibit sentience when embodied in a simulated game-world.” bioRxiv (2021). https://www.biorxiv.org/content/10.1101/2021.12.02.471005v2

Sutton, R. S., & Barto, A. G. Reinforcement Learning: An Introduction. MIT Press. http://incompleteideas.net/book/the-book.html

Amodei, D., et al. “Concrete Problems in AI Safety.” arXiv (2016). https://arxiv.org/abs/1606.06565

European Union. AI Act – Article 11: Technical documentation. https://ai-act-service-desk.ec.europa.eu/en/ai-act/article-11

Friston, K. J., et al. “Active Inference: A Process Theory.” https://activeinference.github.io/papers/process_theory.pdf

Hinton, G., et al. “Deep Neural Networks for Acoustic Modeling in Speech Recognition.” https://www.cs.toronto.edu/~hinton/absps/DNN-2012-proof.pdf

IBM. AI Governance Frameworks and Tools. https://www.ibm.com/solutions/ai-governance

MIT CSAIL. Guided learning lets “untrainable” neural networks realize their potential. https://news.mit.edu/2025/guided-learning-lets-untrainable-neural-networks-realize-their-potential-1218