Why not all “post-AI” systems are aiming for the same thing

Synthetic Intelligence is often discussed as if it were one idea moving in one direction. In reality, it has already begun to split into distinct approaches, each based on a different belief about where intelligence actually comes from.

Our earlier explainer introduced Synthetic Intelligence as a discipline and outlined why it is emerging now. This article goes a step further. It looks at the different paths forming within Synthetic Intelligence itself, and why those paths lead to very different systems over time.

Some approaches treat intelligence as a problem of cognition, knowledge, and reasoning. Others treat it as a property of the system’s structure, shaped by memory, time, and constraint. On the surface, these approaches can look similar. Underneath, they make very different assumptions about what intelligence is and how it should be built.

This piece is not a comparison of products or a ranking of ideas. It is an attempt to clarify the landscape, explain why these differences matter, and show how they shape the future of intelligent systems.

If Synthetic Intelligence is to mature as a field, understanding these foundations is more important than tracking short-term capability gains. That is the perspective this article takes.

Why “Synthetic Intelligence” Exists at All

Synthetic Intelligence did not appear because artificial intelligence suddenly became impressive. It appeared because the limits of today’s AI became increasingly hard to ignore.

For years, progress in AI followed a simple pattern. Models got bigger. Data sets grew. Compute budgets expanded. This delivered striking results, particularly in language and perception. But it also exposed deeper problems that scale alone could not fix. These are not tuning issues or missing features. They are structural.

Modern AI systems are very good at producing outputs that look intelligent. They are far less reliable when asked to behave intelligently over time, across changing conditions, or without constant supervision. Systems fail outside their training context. Memory is shallow or bolted on. Consistency breaks down. Energy costs rise sharply for relatively small gains. More effort is spent explaining behaviour after the fact than shaping it upfront.

What matters most is that these problems do not disappear as models get larger. In many cases, they become more pronounced. This has led many people working close to the technology to a quiet but important realisation. Intelligence does not automatically increase just because model size does.

Scaling worked because it postponed deeper questions. As long as larger models kept improving benchmarks, it was easy to assume that scale itself was the strategy. But scaling also created a dependency. Intelligence became something that existed only at inference time. Memory was treated as an external attachment. Behaviour was recalculated again and again, rather than carried forward.

That approach works in short bursts. It works far less well for systems expected to run continuously, adapt gradually, and remain stable over long periods. At that point, the question changes. It is no longer about how large a model can be. It becomes a question of what kind of system could remain intelligent without having to constantly recompute everything.

This is where Synthetic Intelligence begins to take shape. Rather than pushing existing AI systems harder, it steps back and asks more basic questions. What needs to exist before intelligence can emerge at all? What role do memory and time actually play? Can intelligence be something a system has, rather than something it repeatedly recreates?

The word “synthetic” is deliberate. It does not simply mean artificial. It means engineered with intent, from first principles, with intelligence treated as a property of the system itself rather than a side effect of a model.

This conversation is happening now for practical reasons. The limits of scaling are becoming visible in energy use, cost, and diminishing returns. Expectations are changing as systems are deployed for longer periods in real environments with real consequences. At the same time, there is a growing willingness to question assumptions that once felt settled. Ideas around dynamics, memory, persistence, and emergence are being revisited, not as theory, but as engineering necessities.

Synthetic Intelligence is not a rejection of AI. It is an acknowledgement that intelligence may not live where we first assumed it did.

From this starting point, two broad directions begin to emerge. One treats intelligence mainly as a matter of knowledge, reasoning, and understanding. The other treats intelligence as something that arises from how a system is built and constrained. Understanding these two paths, and why they lead to very different outcomes, is key to understanding where Synthetic Intelligence is heading.

Defining Synthetic Intelligence

Before comparing approaches, it is important to be clear about what Synthetic Intelligence actually means. Much of the confusion in this space comes from the term being used loosely, or as a rebrand for existing AI techniques.

Synthetic Intelligence is not a single technology, model, or architecture. It is a way of thinking about how intelligence is created.

At its core, Synthetic Intelligence treats intelligence as something that is engineered, not trained into existence by scale alone. The focus shifts away from outputs and benchmarks and towards the conditions that allow intelligent behaviour to arise, persist, and remain stable over time.

This makes Synthetic Intelligence fundamentally different from most contemporary AI systems, which are centred on statistical inference and pattern matching.

Synthetic Intelligence starts from a different question. Instead of asking how a system can produce intelligent-looking responses, it asks what kind of system could behave intelligently as a result of how it is constructed.

That distinction matters.

In practical terms, Synthetic Intelligence systems tend to share several characteristics. They treat memory as intrinsic rather than external. They take time seriously, meaning behaviour unfolds across state and history rather than being recalculated from scratch. They emphasise constraints and dynamics, allowing behaviour to emerge from the interaction of system components rather than being explicitly programmed or inferred at every step.

Just as importantly, Synthetic Intelligence does not assume that intelligence must look human. It does not require language, symbols, or explicit reasoning to be present from the start. Intelligence is defined by behaviour, adaptation, and stability, not by resemblance.

This also means that Synthetic Intelligence is not the same thing as artificial general intelligence. AGI is usually framed as a destination, often defined by human-level capability across tasks. Synthetic Intelligence is a discipline. It is concerned with how intelligence is built, not how impressive it appears at any given moment.

It is also not simply neuromorphic computing, cognitive architecture design, or agent systems, although it may overlap with all of these. Those are techniques or domains. Synthetic Intelligence is the broader engineering mindset that asks how those pieces fit together at the system level.

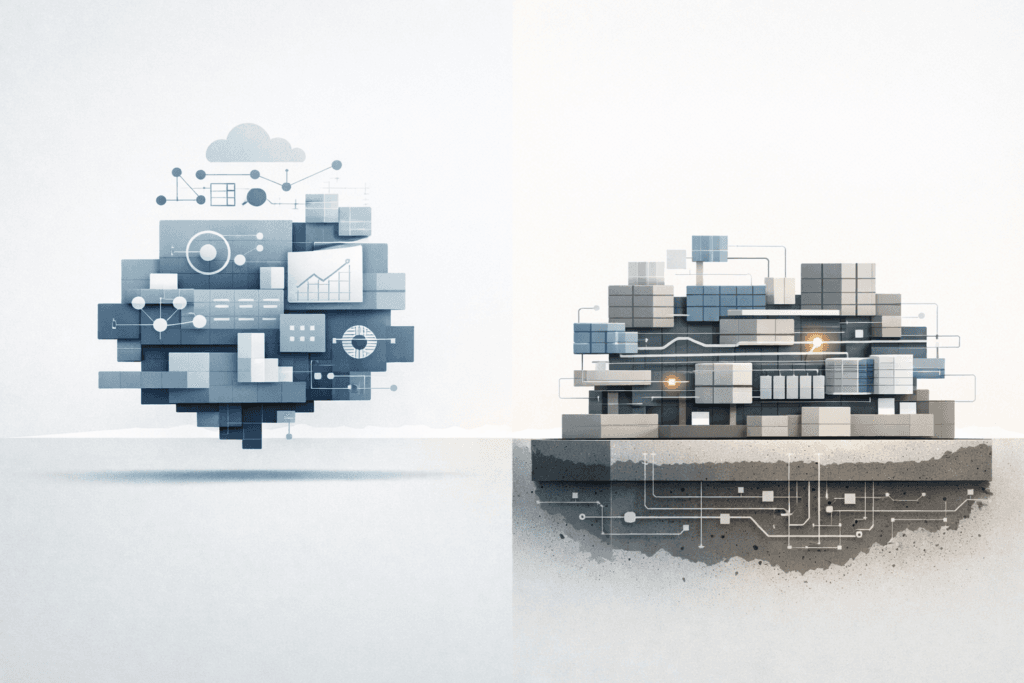

A useful way to think about it is this. Traditional AI focuses on models that perform intelligence. Synthetic Intelligence focuses on systems that possess intelligence as a result of their structure.

Once that distinction is clear, it becomes easier to see why different approaches are emerging within Synthetic Intelligence itself. Some focus on cognition and internal understanding. Others focus on substrates, dynamics, and emergence. Both sit under the same umbrella, but they start from very different assumptions.

Those differences are where the real divergence begins.

A Taxonomy of Synthetic Intelligence Approaches

Once Synthetic Intelligence is defined as a discipline rather than a single technique, it becomes clear that it is not moving in one direction. Different groups are approaching the problem from different starting points, based on where they believe intelligence actually comes from.

These approaches often use similar language and sometimes similar tools, which can make them appear closer than they really are. Under the surface, however, they are built on very different assumptions.

Broadly speaking, three patterns are emerging.

Some efforts extend existing AI systems by adding structure, tools, or layers of control. Others draw inspiration from biology but remain tied to model-centric thinking. A smaller set starts with the system itself and treats intelligence as something that emerges from how that system is constructed.

Understanding these distinctions helps explain why progress in this field can feel uneven, and why results that look similar on the surface may behave very differently in practice.

Model-centric extensions

The most familiar path builds directly on today’s AI models. These systems extend large models with tools, memory stores, agents, or planning layers. The core assumption is that intelligence already exists in the model, and that the right scaffolding will allow it to behave more reliably or autonomously.

This approach has produced rapid, visible progress. Systems can plan tasks, call tools, coordinate actions, and appear more capable than standalone models. In many cases, these techniques are useful and commercially valuable.

However, the centre of gravity does not change. Intelligence still lives inside the model. Memory remains external. Behaviour is still recomputed continuously. When things fail, they fail in ways that are difficult to predict or constrain, because the underlying system has not fundamentally changed.

These approaches stretch existing AI further, but they do not redefine what intelligence is or where it comes from.

Bio-inspired but model-bound systems

A second group takes inspiration from biology. This includes spiking neural networks, neuromorphic hardware, and event-driven processing. These efforts often look very different from mainstream AI and are sometimes presented as radical alternatives.

In practice, many of these systems still inherit model-centric assumptions. They replace one type of model with another, but intelligence is still treated as something learned through training and expressed through inference.

Biological inspiration is often aesthetic rather than structural. Neural terminology is used, but the deeper properties of biological intelligence, such as long-term state, persistence, and energy-aware dynamics, are only partially captured.

These approaches matter, especially at the hardware and efficiency level. But on their own, they do not always escape the idea that intelligence is something computed, rather than something that emerges from a system’s ongoing behaviour.

Substrate-first systems

A smaller and more deliberate group begins somewhere else entirely. Instead of starting with models, they start with the system.

In these approaches, intelligence is not assumed to exist upfront. It is allowed to emerge from the interaction of memory, state, time, and constraint. The substrate is designed first, and behaviour is observed rather than prescribed.

Models may still exist, but they are secondary. They are tools that operate within the system, not the source of intelligence itself. What matters is that behaviour unfolds over time and carries its own history forward.

This shift has deep consequences. Stability, energy use, control, and scalability are addressed at the foundation rather than patched on later. Intelligence becomes something the system does consistently, not something it performs episodically.

These three paths are not mutually exclusive, and future systems may combine elements of all of them. But they represent very different answers to a single question.

Where does intelligence actually live?

From here, the differences between cognition-first and substrate-first approaches become clearer, because they sit on opposite sides of that question.

Cognition-First Synthetic Intelligence

One major direction within Synthetic Intelligence starts from a simple belief. Intelligence is primarily about knowledge, understanding, and reasoning. If a system can form internal models of the world and reason over them coherently, intelligent behaviour will follow.

This way of thinking places cognition at the centre. Intelligence is defined by what the system knows, how it represents that knowledge, and how it reasons about itself and its environment.

In cognition-first approaches, intelligence is treated as an internal phenomenon before it is a behavioural one.

Intelligence as understanding

Cognition-first systems focus on building internal structure. This may include world models, symbolic or semi-symbolic representations, recursive reasoning loops, or explicit self-models. The aim is to give the system a coherent picture of reality and the ability to reflect on that picture.

Behaviour is expected to improve as understanding improves. If the system reasons better, it should act better. If it has a clearer internal model, it should make fewer mistakes.

This mirrors how humans often think about intelligence. We associate being intelligent with knowing things, reasoning about them, and being able to explain our conclusions.

How cognition-first systems are built

In practice, these systems are usually model-centric. They rely on one or more models that perform reasoning, inference, or abstraction. Additional layers are added to support memory, planning, or self-reflection.

The underlying substrate is typically treated as a given. Compute, memory, and execution are assumed to be reliable and interchangeable. The focus stays firmly on the cognitive layer and its internal coherence.

Memory, when present, is often something the system reasons about rather than something it is shaped by. Time is usually handled through iteration rather than persistence.

This makes cognition-first systems relatively easy to reason about and communicate. Their internal processes can often be described in human terms, even if the implementation is complex.

What problem this approach is trying to solve

Cognition-first Synthetic Intelligence is largely a response to the limits of pattern-based AI. It aims to address issues like shallow understanding, brittle reasoning, and the gap between fluent output and genuine comprehension.

At its core, it is trying to answer a specific question.

How does a system know what it knows?

By focusing on internal representations and reasoning, these approaches aim to produce systems that are more explainable, more self-aware, and more aligned with human concepts of understanding.

Examples in the ecosystem

Several organisations and research efforts explore this direction. One example is New Sapience, which focuses on self-modelling and recursive cognition as the foundation of intelligence.

There is also a broader body of academic work on cognitive architectures, machine self-awareness, and reflective systems that fits naturally into this category.

While implementations vary, they share a common assumption. Intelligence emerges from internal understanding and coherent reasoning.

Strengths and limits of cognition-first approaches

Cognition-first systems have clear strengths. They align well with human notions of intelligence. They are often easier to interpret and explain. They offer promising routes towards reasoning, reflection, and conceptual safety.

They also carry limitations. Many assume that intelligence must resemble human cognition. The substrate is rarely questioned. Behavioural stability, energy efficiency, and long-term persistence are often secondary concerns.

Most importantly, cognition-first approaches tend to assume that a suitable substrate already exists.

That assumption leads directly to the next question. What if intelligence does not begin with cognition at all, but with the system it runs on?

That is where the substrate-first view begins.

Substrate-First Synthetic Intelligence

Another direction within Synthetic Intelligence starts from a very different assumption. Intelligence does not begin with knowledge or reasoning. It begins with the system itself.

In substrate-first approaches, intelligence is treated as something that emerges from how a system is built, constrained, and allowed to evolve over time. Behaviour comes first. Understanding, if it appears at all, comes later.

This shifts the focus away from cognition and towards dynamics.

Intelligence as behaviour under constraint

From a substrate-first perspective, intelligence is defined less by what a system knows and more by how it behaves. Can it remain stable over time? Can it adapt without collapsing? Can it respond meaningfully to change without needing to recompute everything from scratch?

In this view, intelligence is not something a system reasons about internally. It is something that shows up in its ongoing interaction with the world.

Memory, state, and time are not supporting features. They are central. Behaviour unfolds across history rather than being regenerated on demand.

How substrate-first systems are built

Substrate-first systems begin with an engineered foundation. This foundation defines how state persists, how memory accumulates, and how constraints shape behaviour. The goal is not to encode intelligence directly, but to create conditions where intelligent behaviour can emerge naturally.

Models may still be used, but they are not the source of intelligence. They operate within the system rather than defining it. What matters most is how the system behaves when nothing explicit is telling it what to do.

The substrate is not treated as interchangeable or abstract. Its properties matter. Small changes at this level can radically alter long-term behaviour.

This makes substrate-first systems harder to design, but also harder to fake. Behaviour has to be earned through structure, not simulated through inference.

What problem this approach is trying to solve

Substrate-first Synthetic Intelligence is aimed at problems that model-centric systems struggle to address.

These include long-term stability, energy efficiency, persistent memory, and predictable behaviour under constraint. Rather than patching these issues at higher layers, substrate-first approaches try to resolve them at the foundation.

At its core, this direction asks a different question.

What needs to exist for intelligence to arise at all?

This reframing moves the conversation away from performance and towards durability.

Examples in the ecosystem

A small but growing number of efforts focus on this direction. One example is Qognetix, which approaches intelligence as an engineered substrate where behaviour emerges from system dynamics rather than from cognitive reasoning layers.

There is also related work in memory-centric computation, time-dependent systems, and biophysically inspired dynamics that prioritise persistence and constraint over representation.

What these efforts share is a belief that intelligence cannot be safely layered on top of an unstable foundation.

Strengths and limits of substrate-first approaches

Substrate-first systems offer several advantages. They address energy use and stability at the root. They support long-term behaviour without constant recomputation. They avoid assuming that intelligence must look human in order to be real.

They also have limits. Their behaviour can be harder to explain in human terms. Evaluation is less straightforward. Progress can appear slower because it is measured in stability rather than spectacle.

Most importantly, substrate-first approaches delay cognition rather than rejecting it. They assume that higher-order reasoning, if it emerges, must rest on a stable system beneath it.

This sets up a clear contrast with cognition-first approaches, not as a rivalry, but as a difference in sequencing.

That contrast is where the real distinction between Synthetic Intelligence approaches becomes unavoidable.

Cognition-First and Substrate-First Compared

At this point, the difference between cognition-first and substrate-first Synthetic Intelligence is no longer a matter of style or emphasis. It is a difference in where intelligence is believed to originate.

Both approaches aim to move beyond the limits of today’s AI. They do so by answering very different underlying questions.

One asks how a system can know and reason. The other asks what kind of system can behave intelligently at all.

Two different starting points

Cognition-first systems begin with the assumption that intelligence lives in internal understanding. If a system can build coherent models of the world and reason over them, intelligent behaviour should follow.

Substrate-first systems begin elsewhere. They assume that intelligence is a property of the system’s construction. Behaviour emerges from memory, time, and constraint, and only later, if at all, becomes something that can be reasoned about.

This single difference shapes everything that follows.

Knowledge versus behaviour

In cognition-first approaches, intelligence is often evaluated by what the system knows. Can it explain its reasoning? Can it reflect on its own state? Can it build consistent internal representations?

In substrate-first approaches, intelligence is evaluated by what the system does. Does it remain stable over time? Does it adapt without breaking? Does its behaviour remain bounded under stress?

One approach values understanding. The other values reliability.

The role of models

Models sit at the centre of cognition-first systems. They perform reasoning, abstraction, and inference. As requirements grow, models tend to grow with them.

In substrate-first systems, models are optional. They may exist, but they are not the source of intelligence. They operate within the system rather than defining it.

This difference matters because models introduce assumptions, while substrates introduce constraints. Assumptions can be violated. Constraints shape behaviour even when nothing is being explicitly reasoned about.

Memory, time, and persistence

Cognition-first systems often treat memory as something to be accessed. Time is handled through repeated cycles of reasoning. State is something the system reasons about.

Substrate-first systems treat memory as something the system is made of. Time is continuous. State persists and carries history forward naturally.

This makes substrate-first systems better suited to long-running intelligence, where behaviour depends on accumulated experience rather than repeated recomputation.
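As a rough illustration of that difference, the sketch below compares a summary that is rebuilt by replaying the full input history on every step with one that is held as persistent state and updated in place. The names `DECAY`, `recompute_from_history`, `PersistentState`, and `compare` are hypothetical, and the decaying average is only a stand-in for whatever dynamics a real substrate would use. It is not drawn from any system mentioned in this article.

```python
from __future__ import annotations

from dataclasses import dataclass

DECAY = 0.9  # hypothetical decay factor, chosen only for illustration


def recompute_from_history(history: list[float]) -> tuple[float, int]:
    """Rebuild the summary from scratch by replaying every past input."""
    state = 0.0
    steps = 0
    for x in history:
        state = DECAY * state + (1 - DECAY) * x
        steps += 1
    return state, steps


@dataclass
class PersistentState:
    """Holds the summary between inputs, so each update is a single step."""

    value: float = 0.0
    steps: int = 0

    def update(self, x: float) -> float:
        self.value = DECAY * self.value + (1 - DECAY) * x
        self.steps += 1
        return self.value


def compare(inputs: list[float]) -> tuple[float, float, int, int]:
    """Feed the same inputs to both styles and count the update steps."""
    history: list[float] = []
    persistent = PersistentState()
    replayed = 0.0
    replay_steps = 0
    for x in inputs:
        history.append(x)
        # Recompute style: the whole history is replayed on every new input.
        replayed, n = recompute_from_history(history)
        replay_steps += n
        # Persistent style: only the new input is folded into existing state.
        persistent.update(x)
    return replayed, persistent.value, replay_steps, persistent.steps


if __name__ == "__main__":
    print(compare([1.0, 2.0, 3.0, 4.0]))
```

For four inputs, the replaying version performs 1 + 2 + 3 + 4 = 10 update steps while the persistent version performs 4, and both arrive at the same value. The gap widens as the history grows, which is the point the section is making about long-running systems.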

Scaling and energy

Cognition-first systems tend to scale by adding complexity. More parameters. More layers. More reasoning depth.

Substrate-first systems aim to scale by improving efficiency at the foundation. Better dynamics. Better use of memory. Less recomputation.

Over time, this difference has serious implications for energy use, cost, and feasibility.

Interpretability and control

Cognition-first systems are often easier to explain in human terms. Their reasoning can be traced, even if imperfectly. This makes them attractive for tasks where explanation matters.

Substrate-first systems are often harder to narrate. Behaviour may be clear, but the internal story is less familiar.

At the same time, control does not always follow explanation. Systems that are harder to describe can still be easier to constrain, because limits are built into the substrate itself.

Not a competition, but a sequencing problem

It is tempting to frame these approaches as competitors. In reality, they operate at different layers.

Cognition-first approaches explore how intelligence reasons. Substrate-first approaches explore what intelligence depends on.

In any mature system, cognition is likely to sit on top of a stable substrate. The reverse is far harder to make work.

Understanding this sequencing is key to understanding where Synthetic Intelligence is likely to go next.

That leads to a broader question. If these approaches are not in conflict, how might they fit together over time?

Why These Approaches Are Complementary

It is easy to treat cognition-first and substrate-first Synthetic Intelligence as competing visions. They are often discussed that way, especially when progress is framed in terms of visible capability or short-term results. In practice, they address different layers of the same problem.

The key is not choosing between them, but understanding how they relate.

Intelligence has layers

Intelligence does not appear all at once. In natural systems, behaviour emerges before reasoning. Stability comes before reflection. Memory exists before meaning.

Substrate-first approaches focus on these lower layers. They are concerned with persistence, dynamics, and constraint. Cognition-first approaches focus on higher layers, where understanding, abstraction, and reasoning become possible.

Seen this way, the two approaches are not opposites. They are stacked.

A question of order, not belief

Many disagreements in this space are really about sequencing.

Cognition-first approaches often assume that a suitable substrate already exists. If that assumption holds, building reasoning and understanding on top can make sense.

Substrate-first approaches question that assumption. They argue that without a stable foundation, higher-level cognition will always be brittle, no matter how sophisticated it appears.

Both positions can be valid, but not at the same stage of development.

Lessons from biological systems

Biological intelligence offers a useful reference point. Neurons do not reason. They fire. Networks stabilise before they understand. Cognition emerges from dynamics, not the other way around.

This does not mean artificial systems must copy biology. It does suggest that intelligence depends on structure before it depends on meaning.

Substrate-first approaches take this lesson seriously. Cognition-first approaches explore what becomes possible once that structure is in place.

Likely paths of integration

Over time, mature Synthetic Intelligence systems are likely to combine both perspectives.

A stable, engineered substrate provides persistence, control, and efficiency. On top of that, cognitive layers can emerge that reason, reflect, and model the world.

Seen this way, cognition-first work is not wasted. It is simply premature without the right foundation.

Why this framing matters

Treating Synthetic Intelligence as a zero-sum competition between philosophies slows progress. It encourages premature abstraction and rewards impressive demos over durable systems.

Seeing the field as layered allows different efforts to contribute without talking past each other. It also makes clearer which problems must be solved first.

That framing leads naturally to the long-term question. If foundations matter this much, what happens when they are chosen badly, or too quickly?

That question brings us to the role of substrate over time.

Why Substrate Matters Long Term

The reason substrate-first Synthetic Intelligence matters is not because it promises faster breakthroughs or more impressive demonstrations. It matters because substrate choices are hard to undo.

Once a system’s foundations are set, everything built on top of them inherits those decisions. Over time, those inherited assumptions become constraints of their own.

Foundations create lock-in

In the history of computing, the most consequential decisions were rarely about applications. They were about instruction sets, memory models, execution rules, and where boundaries were drawn between hardware and software.

Those choices shaped what was possible decades later.

Synthetic Intelligence is approaching a similar moment. Decisions made at the substrate level will determine how systems scale, how controllable they are, and how much complexity must be layered on later to compensate for early shortcuts.

Once a field commits to a particular foundation, changing direction becomes expensive, slow, and politically difficult.

Scaling is not just doing more of the same

Much of modern AI treats scaling as repetition. More data. More parameters. More compute. This has worked, but it also hides a cost. Intelligence becomes something that must be recomputed continuously rather than something that persists.

Substrate-first thinking reframes scaling. The focus shifts to efficiency, stability, and reuse. Better dynamics instead of larger models. Memory that carries forward instead of inference that starts from zero.

This matters because intelligence that only scales through volume eventually hits real limits. Energy. Cost. Infrastructure. Environmental impact.

Substrate decisions determine whether those limits arrive early or can be pushed far into the future.

Energy is a first-order concern

Energy is often treated as an optimisation problem to be solved later. At the substrate level, it cannot be deferred.

Systems that rely on constant inference consume energy continuously. Systems that rely on persistence and state can trade activity for structure. Intelligence becomes something that is maintained rather than constantly regenerated.
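One way to picture trading activity for structure is a component that holds its last response and only recomputes when its input has moved meaningfully. The sketch below is a toy under stated assumptions: `HeldResponse`, `tolerance`, and the squaring function are hypothetical, and the count of compute calls is a crude stand-in for activity, not a measurement of energy.

```python
from typing import Callable, List, Optional


class HeldResponse:
    """Keeps the last output as state and recomputes only on meaningful change."""

    def __init__(self, compute: Callable[[float], float], tolerance: float) -> None:
        self.compute = compute
        self.tolerance = tolerance
        self.last_input: Optional[float] = None
        self.last_output: float = 0.0
        self.compute_calls = 0  # how often the expensive path actually ran

    def respond(self, x: float) -> float:
        changed = (
            self.last_input is None
            or abs(x - self.last_input) > self.tolerance
        )
        if changed:
            # Expensive path: only taken when the input has really moved.
            self.last_output = self.compute(x)
            self.last_input = x
            self.compute_calls += 1
        # Otherwise the held state answers without any recomputation.
        return self.last_output


def run(signal: List[float]) -> tuple:
    unit = HeldResponse(compute=lambda v: v * v, tolerance=0.05)
    outputs = [unit.respond(x) for x in signal]
    return outputs, unit.compute_calls


if __name__ == "__main__":
    print(run([1.0, 1.0, 1.01, 1.0, 2.0, 2.0]))
```

On this six-sample signal the compute function runs twice rather than six times. The cost is a small staleness bounded by `tolerance`, which is the kind of trade-off a real design would have to justify.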

This is not a moral argument about sustainability. It is a practical one about whether Synthetic Intelligence can operate at scale without collapsing under its own requirements.

Control comes from constraints, not explanations

There is a common assumption that understanding a system’s reasoning is the same as controlling its behaviour. In practice, control comes from limits built into the system itself.

Substrate-first systems aim to make behaviour predictable by design. Constraints define what is possible. Dynamics shape how behaviour unfolds. Stability is engineered, not hoped for.

This changes how risk is managed. Instead of asking why a system did something after the fact, the question becomes under what conditions it could have done something else.
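A minimal sketch of what "limits built into the system" could look like in code follows. It assumes a single scalar state with hard bounds and a maximum change per step. `Bounds`, `ConstrainedUnit`, and `max_step` are hypothetical names used only for illustration, not a description of how any particular substrate works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bounds:
    low: float
    high: float


class ConstrainedUnit:
    """State whose range and rate of change are limited by construction."""

    def __init__(self, bounds: Bounds, max_step: float, initial: float = 0.0) -> None:
        self.bounds = bounds
        self.max_step = max_step
        self.state = self._clamp(initial, bounds.low, bounds.high)

    @staticmethod
    def _clamp(value: float, low: float, high: float) -> float:
        return max(low, min(high, value))

    def step(self, drive: float) -> float:
        # Rate constraint: no input can move the state faster than max_step.
        delta = self._clamp(drive, -self.max_step, self.max_step)
        # Range constraint: the state can never leave its bounds.
        self.state = self._clamp(
            self.state + delta, self.bounds.low, self.bounds.high
        )
        return self.state


if __name__ == "__main__":
    unit = ConstrainedUnit(Bounds(-1.0, 1.0), max_step=0.25)
    # Even an extreme drive produces bounded, gradual behaviour.
    print([unit.step(1e9) for _ in range(6)])
```

Even with an extreme input, the state moves by at most `max_step` per update and never leaves its bounds. Nothing needs to be explained after the fact, because the set of reachable behaviours was fixed before the input arrived.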

The cost of getting foundations wrong

When foundations are weak, complexity accumulates above them. Safety mechanisms multiply. Cognitive layers become more elaborate. Behaviour becomes harder to reason about, not easier.

History suggests that retrofitting fundamentals rarely works well. Early shortcuts tend to demand permanent compensation later.

Synthetic Intelligence forces a choice. Either foundations are treated as an engineering discipline, or they are treated as an implementation detail and paid for later.

Substrate as a discipline

Treating substrate seriously changes how progress is measured. Stability matters more than benchmarks. Behaviour over time matters more than short-term performance. Durability matters more than novelty.

This can make progress look slower. In reality, it is progress that compounds.

With that in mind, the final question is not about which approach wins. It is about how this field should be understood at all.

Synthetic Intelligence Is a Discipline, Not a Product

Synthetic Intelligence is often discussed as though it were a single breakthrough waiting to happen. A model. A system. A moment. In reality, it is better understood as the early formation of a new discipline.

Disciplines are not defined by demos or product launches. They are defined by the problems they take seriously and the foundations they choose to build on.

Moving beyond labels and rebrands

As interest in Synthetic Intelligence grows, the term will inevitably be used in different ways. Some will apply it to new architectures. Others will use it to reframe existing systems. That is normal in the early stages of any field.

What matters is not the label, but the assumptions underneath it. Are we building intelligence by stacking more abstraction on top of fragile foundations, or by engineering systems that can sustain intelligent behaviour over time?

Synthetic Intelligence, at its core, is a commitment to the second path.

Intelligence is not something we add later

One of the clearest lessons emerging from this space is that intelligence cannot be safely bolted on. Whether framed as reasoning or behaviour, intelligence reflects the structure of the system beneath it.

When foundations are improvised, higher layers must compensate indefinitely. Complexity grows. Control weakens. Stability becomes harder to guarantee.

Synthetic Intelligence shifts the focus away from optimising outputs and towards engineering the conditions under which intelligence can emerge and persist.

Why this moment matters

This field is still young. Substrates are not standardised. Evaluation methods are still evolving. Long-term consequences are not yet fully priced into decisions.

That means there is still room to choose carefully.

History shows that the most important choices are often the least visible at the time. By the time applications dominate the conversation, foundational decisions have already been locked in.

A more useful question

Rather than asking when machines will become intelligent, a more useful question is what must be true for intelligence to arise, remain stable, and stay controllable.

That question does not point to a single company or roadmap. It points to a discipline that is still being formed, through careful engineering rather than rapid iteration.

Closing thought

Synthetic Intelligence will not be defined by who moves fastest. It will be defined by who understands what cannot be changed later.

In that sense, the most important work in this space is not about intelligence itself, but about the structures that make intelligence possible at all.

For a foundational definition of Synthetic Intelligence and its origins, see our explainer here.